“WHAT ARE YOU DOING? Have you started a garden? Are you helping to win the war? Everybody must work and work hard. Soldiers and sailors cannot fight without the help of the rest of us…When the count is made, on the roll of National Service, will you be PLUS one, or MINUS one?”[1]

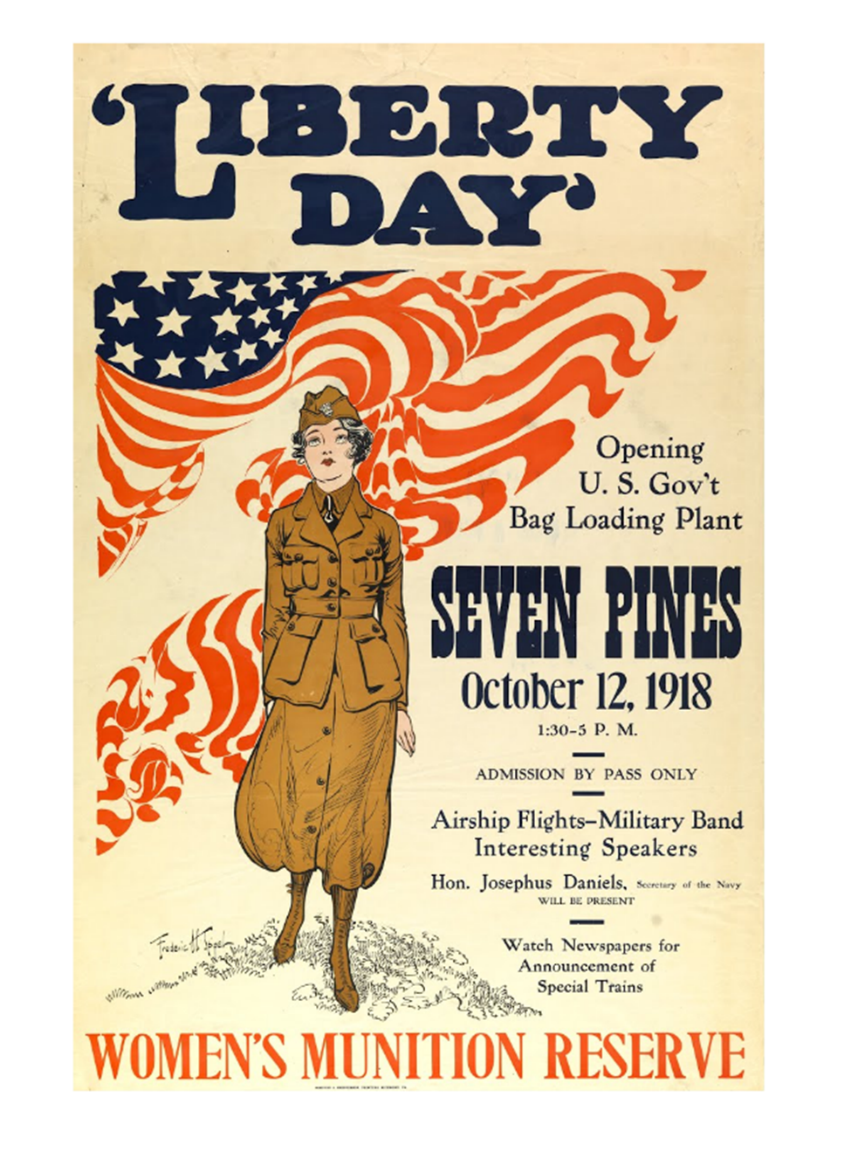

American men fought, but the entire nation went to war in World War I. War altered all aspects of American life. Some civil liberties like freedom of the press were restricted in the name of national security. The draft created soldiers out of male citizens. The federal government gained unprecedented oversight over domestic production, foreign trade, and other economic areas that impacted mobilization and maintenance of the war effort. Defeating Germany required the work and sacrifices of every American, a charge they were reminded of at every turn.

Originally intended as an intermediary between the government and the press, George Creel’s Committee on Public Information (CPI) soon turned to spreading the gospel of patriotism. The passage above is typical for media during the time; what is a bit atypical (at least through modern eyes) is that this article was published in Boys’ Life: The Boy Scouts’ Magazine. The effort to “draw children to military values and service” led directly to what Dr. Ross F. Collins termed the “militarization of American childhood.”[2]

Magazines such as Boys’ Life, St. Nicholas Magazine for Boys and Girls, and American Boy linked children’s activities directly to the success of the soldiers fighting overseas. They encouraged planting gardens, buying victory bonds, volunteering for the American Red Cross, and other activities. Idle American hands were not just the devil’s workshop, but the enemy’s. The magazines depicted war as a patriotic and heroic duty, soldiers as valiant adventurers, and men, women, and children back home as the first line of national defense in their absence.

Even vocabulary and syntax changed to reflect the urgency of the message. Sentences became shorter and often addressed the reader directly in the second person, something uncommon in today’s more detached third-person journalistic style. For example, an article on pest control in home gardens begins, “The war is on. You have enlisted as a gardener…Mobilize your forces. Get a store of ammunition (arsenate of lead and the other poisons), get a machine gun or two (hand sprayers), and post your guards…”[3] Children listened: growing, saving, doing, and doing without as directed. An article entitled “How the Boy Scouts Help in the War” even described a group of Boy Scouts who created the Boy Scout Emergency Coast Patrol and volunteered for service at home “to protect their mothers and sisters.”[4]

Of course, most of American children’s education in war did not come from their voluntary consumption of popular media. Wholesale militarization could only be achieved through compulsory education on the virtues of war. The lessons in patriotism and civic duty taught at home were reinforced at school. A former educator himself, President Woodrow Wilson understood the power of the captive audience of a classroom. The CPI began publishing a bimonthly newsletter, National School Service, to instruct teachers on how to teach the war, and more importantly, to emphasize the importance of every citizen’s support, regardless of age. “There may be those who have doubts as to what their duty in this crisis is,” wrote Herbert Hoover in the inaugural edition of the newsletter, “but the teachers cannot be of them.”[5]

The newsletter urged teachers to encourage their students to participate in many of the activities celebrated in the aforementioned children’s magazines. “War savings stamps, food and fuel economy, the Red Cross, (and) the Liberty Loan, are not intrusions on school work,” explained one article. “They are unique opportunities to enrich and test not knowledge, but the supreme lesson of intelligent and unselfish service.”[6] This idea dovetailed neatly with the president’s belief in the subjugation of individual agendas and gains to those of society as a whole, the need for the interest of every citizen to be “consciously linked with the interest of his fellow citizens, (his) sense of duty broadened to the scope of public service.”[7] Like their students, teachers listened, but they faced a stiff penalty if they refused. “Teachers who remained neutral concerning patriotism could be fired, as ten were in New York City, of hundreds in many incidents across the nation,” explained Collins in Children, War, and Propaganda.[8]

“It is not the object of this periodical to carry the war into the schools. It is there already…There can be but one supreme passion for our America; it is the passion for justice and right…for a world free and unfearful,” explained Guy Stanton Ford, director of Civic and Educational Publications for the CPI.[9] Wilson echoed Ford’s ideas, saying “it is not an army that we must shape and train for war; it is a nation.”[10] War mobilization militarized every aspect of American life; childhood was no exception. “Children were exhorted to sacrifice individuality for the group and pleasure for work; in essence, to take on the previous ‘adult’ role of responsible worker,” wrote Andrea McKenzie in “The Children’s Crusade: American Children Writing War.”[11] Once this facet of innocence was lost, it was not and could not be restored. Children did not fight in World War I, but their childhood experiences would shape their response when their nation called them to the front lines in the 1940s. The “greatest generation” came of age believing their parents’ war was also theirs. They prepared from childhood to serve in their own.

[1] “How the Boy Scouts Help in the War,” Boys’ Life: The Boy Scouts’ Magazine 1, no. 1 (June 1917), 42, Boyslife.org Wayback Machine, accessed October 31, 2016, http://boyslife.org/wayback/.

[2] Ross F. Collins, “This is Your War, Kids: Selling World War I to American Children,” North Dakota State University PubWeb, accessed November 1, 2016, https://www.ndsu.edu/pubweb/~rcollins/436history/thisisyourwarkids.pdf, 3; 24.

[3] “The Second Phase of the War,” Boys’ Life: The Boy Scouts’ Magazine 1, no. 1 (June 1917), 34, Boyslife.org Wayback Machine, accessed October 31, 2016, http://boyslife.org/wayback/.

[4] “How the Boy Scouts Help in the War,” 7.

[5] Herbert Hoover, “Hoover Commends Teachers,” National School Service 1, no. 1 (September 1, 1918), 1, National School Service 1918-1919, Kindle edition.

[6] Guy Stanton Ford, “National School Service,” National School Service 1, no. 1 (September 1, 1918), 8, National School Service 1918-1919, Kindle edition.

[7] Henry A. Turner, “Woodrow Wilson and Public Opinion,” The Public Opinion Quarterly 21, no. 4 (Winter, 1957-1958): 505-520, accessed October 31, 2016, http://www.jstor.org/stable/2746762, 507.

[8] Ross F. Collins, Children, War, and Propaganda (New York: Peter Lang, Inc., 2011), accessed October 31, 2016, http://www.childrenwarandpropaganda.com.

[9] Ford, “National School Service,” 9.

[10] Woodrow Wilson, quoted in National School Service, 9.

[11] Andrea McKenzie quoted in Ross F. Collins, “This is Your War, Kids: Selling World War I to American Children,” 10.

Sources Cited

Boys’ Life: The Boy Scouts’ Magazine 1, no. 1 (June 1917), 42, Boyslife.org Wayback Machine, accessed October 31, 2016, http://boyslife.org/wayback/.

Collins, Ross F. Children, War, and Propaganda (New York: Peter Lang, Inc., 2011), accessed October 31, 2016, http://www.childrenwarandpropaganda.com.

Collins, Ross F. “This is Your War, Kids: Selling World War I to American Children,” North Dakota State University PubWeb, accessed November 1, 2016, https://www.ndsu.edu/pubweb/~rcollins/436history/thisisyourwarkids.pdf.

National School Service 1, no. 1 (September 1, 1918), 1, National School Service 1918-1919, Kindle edition.

Turner, Henry A. “Woodrow Wilson and Public Opinion,” The Public Opinion Quarterly 21, no. 4 (Winter, 1957-1958): 505-520, accessed October 31, 2016, http://www.jstor.org/stable/2746762.